About a year ago I set up ZoneMinder to monitor my entryway. There was one problem: the host running ZoneMinder sits in that same entryway, so if it gets stolen, the recordings are stolen with it. I spent a lot of time choosing a way to replicate the recordings to a remote server. Most of the solutions I found work in “batch” mode: they start replication every NN seconds or after a certain number of events has accumulated, and they usually use rsync. Rsync reduces traffic, but it increases the delay between a new frame being written to the local disk and that frame being written on the remote side. In this situation every second counts, so I decided that tools like “unison”, “inosync”, “csync2” and “lsyncd” were not suitable.

I tried DRBD but ran into a few problems. First, I have no spare partition on the host and cannot shrink the existing partitions to make one. I tried loopback devices, but DRBD has a deadlock bug when used with loopback. That setup worked for about two days before the deadlock occurred; after that you cannot read the contents of the mounted filesystem or unmount it, and the only fix I found was a reboot. The second problem was a “split brain” situation when the master host restarts. I did not spend time looking for a solution because I already had the first problem. It can probably be fixed with a split-brain handler script or with “heartbeat”. DRBD has great write speed in asynchronous mode and low overhead, so in another situation I might well choose it.

Next I tried “glusterfs”, starting with glusterfs 3.0. It was easy to configure and it worked, but it was very slow. For good performance glusterfs needs low latency between hosts. It is useful on local networks but completely useless for hosts connected over slow links. Also, glusterfs 3.0 had no asynchronous write mode like DRBD's. I gave up searching for a while, but later I found in the release notes for glusterfs 3.2 that gluster had gained “geo-replication”. At first I thought this was exactly what I needed, and I started configuring it before I understood how it works (see the conclusion).

If you run into trouble with installation or configuration, consult the GlusterFS manual.

On Debian, the first thing you need to do is add the backports repository on the host running ZoneMinder and on the hosts you plan to replicate data to:

$ echo 'deb http://backports.debian.org/debian-backports squeeze-backports main contrib non-free' >> /etc/apt/sources.list

$ apt-get update

$ apt-get install glusterfs-server
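Because the echo above appends with `>>`, running it twice leaves a duplicate entry in sources.list. A minimal sketch of an idempotent variant follows; the helper name `add_line_once` is my own invention, not part of apt or Debian.

```shell
#!/bin/sh
# Append a line to a file only if that exact line is not already present.
# add_line_once is a hypothetical helper name used for illustration.
add_line_once() {
    line=$1
    file=$2
    # -x: match the whole line, -F: treat the pattern as a fixed string
    grep -qxF -- "$line" "$file" 2>/dev/null || printf '%s\n' "$line" >> "$file"
}

# Possible usage (as root):
#   add_line_once 'deb http://backports.debian.org/debian-backports squeeze-backports main contrib non-free' /etc/apt/sources.list
```

The repository line has to be on a single line inside the quotes, because a backslash inside single quotes is not treated as a line continuation by the shell.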

After that you must open ports for gluster on all hosts where you want to use it:

$ iptables -A INPUT -m tcp -p tcp --dport 24007:24047 -j ACCEPT

$ iptables -A INPUT -m tcp -p tcp --dport 111 -j ACCEPT

$ iptables -A INPUT -m udp -p udp --dport 111 -j ACCEPT

$ iptables -A INPUT -m tcp -p tcp --dport 38465:38467 -j ACCEPT

If you do not plan to use glusterfs over NFS (I don't), you can skip the last rule.
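If you have several hosts to prepare, the rules can be generated by a small function that makes the NFS rule optional. This is only a sketch: `gluster_fw_rules` is a made-up name, and the function prints the commands instead of running them so you can review them first. It writes the protocol before the match module, which is the order iptables-save prints.

```shell
#!/bin/sh
# Print the iptables commands for gluster; pass "yes" to include the NFS ports.
# gluster_fw_rules is a hypothetical helper, not part of gluster or iptables.
gluster_fw_rules() {
    with_nfs=${1:-no}
    echo "iptables -A INPUT -p tcp -m tcp --dport 24007:24047 -j ACCEPT"
    echo "iptables -A INPUT -p tcp -m tcp --dport 111 -j ACCEPT"
    echo "iptables -A INPUT -p udp -m udp --dport 111 -j ACCEPT"
    if [ "$with_nfs" = "yes" ]; then
        echo "iptables -A INPUT -p tcp -m tcp --dport 38465:38467 -j ACCEPT"
    fi
}

# Possible usage (as root), after checking the printed rules:
#   gluster_fw_rules | sh
```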

Once the ports are open, gluster is installed and the service is running, you need to add gluster peers. For example, say you run ZoneMinder on host “zhost” and want to replicate to host “rhost” (do not forget to add both hosts to /etc/hosts on both sides). Run “gluster” and add the peer (here and below, gluster commands must be executed on the master host, i.e. on zhost):

$ gluster

gluster> peer probe rhost

Let’s check it:

gluster> peer status

Number of Peers: 1

Hostname: rhost

Uuid: 5e95020c-9550-4c8c-bc73-c9a120a9e96e

State: Peer in Cluster (Connected)

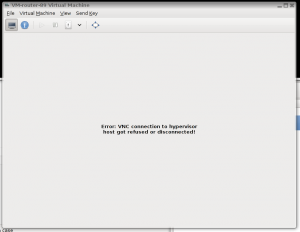
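If you want to check the peer from a script rather than by eye, the status output can be parsed. A rough sketch, assuming the output keeps the "Hostname:" / "State:" layout shown above; `peer_connected` is a name I made up.

```shell
#!/bin/sh
# Succeed if the named peer is reported as connected.
# Reads `gluster peer status` output on stdin; peer_connected is a hypothetical helper.
peer_connected() {
    awk -v host="$1" '
        $1 == "Hostname:" { current = $2 }
        $1 == "State:" && current == host && /\(Connected\)/ { found = 1 }
        END { exit (found ? 0 : 1) }
    '
}

# Possible usage on zhost:
#   gluster peer status | peer_connected rhost && echo "rhost is connected"
```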

Next you need to create the volumes on both hosts; do not forget to create the directories where gluster will store its files. For example, if you plan to use “/var/spool/glusterfs”:

gluster> volume create zoneminder transport tcp zhost:/var/spool/glusterfs

Creation of volume zoneminder has been successful. Please start the volume to access data.

gluster> volume create zoneminder_rep transport tcp rhost:/var/spool/glusterfs

Creation of volume zoneminder_rep has been successful. Please start the volume to access data.

gluster> volume start zoneminder

Starting volume zoneminder has been successful

gluster> volume start zoneminder_rep

Starting volume zoneminder_rep has been successful

After that you must configure geo-replication:

gluster> volume geo-replication zoneminder gluster://rhost:zoneminder_rep start

Starting geo-replication session between zoneminder & gluster://rhost:zoneminder_rep has been successful

And check that it works:

gluster> volume geo-replication status

MASTER SLAVE STATUS

--------------------------------------------------------------------------------

zoneminder gluster://rhost:zoneminder_rep starting...

gluster> volume geo-replication status

MASTER SLAVE STATUS

--------------------------------------------------------------------------------

zoneminder gluster://rhost:zoneminder_rep OK
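The session needs a moment to go from “starting...” to “OK”, so a script has to poll. A minimal sketch, assuming the three-column status layout shown above with the state in the last column; `georep_state` and `wait_georep_ok` are hypothetical names, and the retry count and 2-second pause are arbitrary.

```shell
#!/bin/sh
# Print the STATUS column for the given master volume.
# Reads `gluster volume geo-replication status` output on stdin.
georep_state() {
    awk -v master="$1" '$1 == master { print $NF; exit }'
}

# Poll until the session for the master volume reports OK.
# $1 = master volume, $2 = number of attempts (default 30).
wait_georep_ok() {
    master=$1
    tries=${2:-30}
    while [ "$tries" -gt 0 ]; do
        state=$(gluster volume geo-replication status | georep_state "$master")
        [ "$state" = "OK" ] && return 0
        tries=$((tries - 1))
        sleep 2
    done
    return 1
}

# Possible usage on zhost:
#   wait_georep_ok zoneminder || echo "geo-replication did not come up" >&2
```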

I also made the following configuration changes:

gluster> volume geo-replication zoneminder gluster://192.168.107.120:zoneminder_rep config sync-jobs 4

geo-replication config updated successfully

gluster> volume geo-replication zoneminder gluster://192.168.107.120:zoneminder_rep config timeout 120

geo-replication config updated successfully

gluster> volume set zoneminder nfs.disable on

Set volume successful

gluster> volume set zoneminder_rep nfs.disable on

Set volume successful

gluster> volume set zoneminder nfs.export-volumes off

Set volume successful

gluster> volume set zoneminder_rep nfs.export-volumes off

Set volume successful

gluster> volume set zoneminder performance.stat-prefetch off

Set volume successful

gluster> volume set zoneminder_rep performance.stat-prefetch off

Set volume successful
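The six `volume set` commands are the same three options applied to two volumes, so they can be generated in a loop. A sketch only: `volume_set_cmds` is a made-up name, and it prints the non-interactive form of the commands (`gluster volume set ...` from the shell rather than from the `gluster>` prompt) so you can review them before running anything.

```shell
#!/bin/sh
# Print one `gluster volume set` command per option for every volume named.
# volume_set_cmds is a hypothetical helper; the option list mirrors the session above.
volume_set_cmds() {
    for vol in "$@"; do
        for opt in "nfs.disable on" "nfs.export-volumes off" "performance.stat-prefetch off"; do
            echo "gluster volume set $vol $opt"
        done
    done
}

# Possible usage on zhost:
#   volume_set_cmds zoneminder zoneminder_rep | sh
```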

I disabled NFS because I did not plan to use the gluster volumes over NFS. I also disabled stat-prefetch because it produced the following error in the geo-replication module:

E [stat-prefetch.c:695:sp_remove_caches_from_all_fds_opened] (-->/usr/lib/glusterfs/3.2.7/xlator/mount/fuse.so(fuse_setattr_resume+0x1b0) [0x7f2006ba8ed0] (-->/usr/lib/glusterfs/3.2.7/xlator/debug/io-stats.so(io_stats_setattr+0x14f) [0x7f200467db8f] (-->/usr/lib/glusterfs/3.2.7/xlator/performance/stat-prefetch.so(sp_setattr+0x7c) [0x7f200489c99c]))) 0-zoneminder_rep-stat-prefetch: invalid argument: inode
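To see whether turning the option off really silences the error, you can count matching lines in the log before and after the change. A small sketch, assuming error lines contain `E [<source file>:` as in the message above; `count_errors_from` is a hypothetical name and the log location depends on your install.

```shell
#!/bin/sh
# Count error-level log lines raised from a given source file, e.g. stat-prefetch.c.
# Reads log text on stdin; count_errors_from is a hypothetical helper.
count_errors_from() {
    # grep -c exits non-zero when the count is 0, so normalise the exit status
    grep -c -F -- "E [$1:" || true
}

# Possible usage (the log path is an assumption, check where your gluster logs live):
#   count_errors_from stat-prefetch.c < /path/to/gluster/logfile
```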

In conclusion, I must say that this is not the solution I was looking for because, as it turned out, gluster uses rsync for geo-replication. Pros of this solution: it works and is easy to set up. Cons: it uses rsync. I also found that Debian squeeze cannot mount gluster volumes during the boot sequence. I tried modifying /etc/init.d/mountnfs.sh, but without result, so I just added a mount command to the ZoneMinder start script.

PS

echo 'cyclope:zoneminder /var/cache/zoneminder glusterfs defaults 0 0' >> /etc/fstab

And add 'mount /var/cache/zoneminder' to the start section of /etc/init.d/zoneminder
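A plain mount in the start section is run again on every restart of the service, while the volume is still mounted from the previous start. A sketch of a guard you could put in front of it; `is_mounted` is a made-up helper that reads the mounts table, which I assume to be /proc/mounts.

```shell
#!/bin/sh
# Succeed if the given directory appears as a mount point in the mounts table.
# $1 = mount point, $2 = mounts table (defaults to /proc/mounts).
# is_mounted is a hypothetical helper used for illustration.
is_mounted() {
    awk -v mp="$1" '$2 == mp { found = 1 } END { exit (found ? 0 : 1) }' "${2:-/proc/mounts}"
}

# Possible line for the start section of /etc/init.d/zoneminder:
#   is_mounted /var/cache/zoneminder || mount /var/cache/zoneminder
```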

PPS

Do not forget to stop ZoneMinder and copy the contents of /var/cache/zoneminder to the new partition before using it.

A few days ago I needed to split a single FLAC file and encode it to MP3. I found a few solutions: some only split the FLAC file, others only encode FLAC to MP3, but none of them does both in one run.
